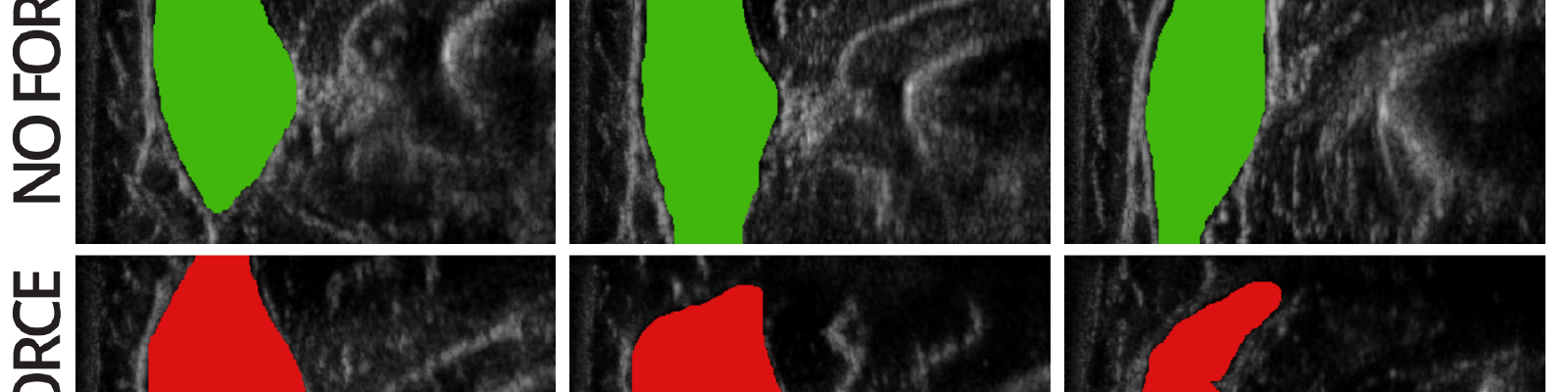

The cross-sectional area of the brachioradialis muscle varies substantially, and nonlinearly, across elbow angles and force conditions.

Musculoskeletal Modeling for Physical HRI

Abstract

While human kinematics can be measured to a significant extent using motion capture, no existing system can yet predict human dynamics (including contact forces and joint torques) from non-invasive sensing during manipulation tasks. Such a system would enable safer, more effective prosthetic and exoskeletal devices and would allow much more rigorous algorithmic treatment of tasks in physical human-robot interaction (HRI). The goal of this research is therefore to create a sensor-driven musculoskeletal model of the human arm that is both a) predictive of kinematics and dynamics during arbitrary manipulation tasks and b) customizable to individuals, including those with pathological morphology. Toward this objective, I will present preliminary results on how several non-invasive sensing modalities can be used to infer contact forces and joint torques. These include magnetic resonance imaging (MRI) to extract relevant morphological parameters; ultrasound to measure muscle cross-sectional area and its relation to exerted force; surface electromyography (sEMG) as a measure of neural muscle activation; and, perhaps most notably, acoustic myography (AMG), a novel modality that measures muscle vibration and that we show to be more reliably correlated with force output than sEMG, especially under dynamic conditions. In the future, we hope to use all of these sensors in concert to perform real-time dynamics inference at a resolution sufficient to analyze and discriminate the arbitrary manipulation tasks that occur in daily life.
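To make the phrase "correlated with force output" concrete, the sketch below shows one common way such a relationship can be quantified: compute a root-mean-square (RMS) envelope of a raw sensor signal (sEMG or AMG alike) and take its Pearson correlation with measured force. This is an illustrative assumption, not the method used in this work. The helper names `moving_rms` and `pearson_r`, the window length, and the data are all hypothetical, and the force and signal traces are synthetic.

```python
import math
import random


def moving_rms(signal, window):
    """RMS envelope over a trailing window of `window` samples (hypothetical helper)."""
    env = []
    for i in range(len(signal)):
        start = max(0, i - window + 1)  # shorter window at the start of the record
        seg = signal[start:i + 1]
        env.append(math.sqrt(sum(x * x for x in seg) / len(seg)))
    return env


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mx = sum(x) / n
    my = sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


# Synthetic example only: not real sEMG/AMG or force data.
random.seed(0)
n = 2000
force = [math.sin(math.pi * i / n) for i in range(n)]  # force profile, arbitrary units
raw = [f * random.gauss(0.0, 1.0) for f in force]      # signal whose amplitude scales with force
envelope = moving_rms(raw, window=100)
r = pearson_r(envelope, force)
print(f"Pearson r between envelope and force: {r:.3f}")
```

Under this kind of analysis, a modality that is "more reliably correlated" with force would yield consistently higher r across trials and conditions. A trailing RMS window is only one envelope choice; rectification followed by low-pass filtering is another common option.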